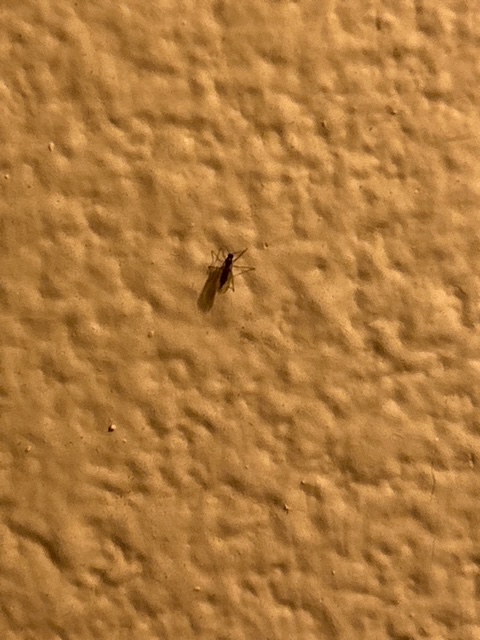

In the news here at the beginning of October, we’ve been hearing about the tiny flying, biting insects we call no-see-ums. Minute pirate bugs and biting midges have been flushed from the fields by the harvesters and are looking for a warm place to winter. They’re so small (1 to 3 millimeters long) that you can’t really see them. That would be why we call them no-see-ums.

There are other insects, too, that are looking for a warm, safe place to overwinter. We’ve had a bit of an invasion of them recently. The ones we have aren’t biting. They’re just squeezing in through the window screens and congregating on surfaces. Naturally, we wanted to know what they were. So we got out the iPhone with its handy-dandy insect identification app and took a picture. Confidently, boldly, the app returned a name and description: buzzer midge, AKA Green Bay fly, lake midge, and Winnebago lake midge.

Well, maybe, but with all that lake in its name, it sounds like it wants to be near water, which we are not. Let’s try again. Another equally confident response: ghost ant. Ants and flies are not the same. H’m. Try again.

Rice weevil. Weevil? A weevil is a beetle, not a fly or an ant. Obviously something is wrong here. Let’s try one more time. Maybe we’ll at least get a match. Lovebug, a species of March fly. This isn’t working. The AI powering these identifications is making things up rather than saying “I don’t know.” The first three answers aren’t even closely related: a fly, an ant, and a beetle belong to three different insect orders.

I’ve been using AI extensively over the past year or so for tasks unrelated to this blog, and this gives me pause. Recently, on a trip to Alaska, I used it to identify a flowering plant. It came back with the wrong plant: it called the flower a loosestrife when it was really fireweed. I used that wrong answer in my travelogue and had to publish a correction when it was called to my attention.

I should know better than to expect AI to get it right every time. Google Lens told me that I was looking at a meteorite when human eyes declared it to be a jacaranda tree in full bloom. How it got a meteorite out of a jacaranda tree is beyond me.

And yet, AI has given me access to information far beyond my experience or expectation. I use some apps now that are specifically designed for blind people to read text and digital displays, describe objects and scenes, and locate specific objects.

I use four tools that will describe, in whatever level of detail I need, a photograph or a scene. Using BeMyEyes, SeeingAI, my Meta Ray-Ban glasses, or PictureSmart, I can ask clarifying questions that the AI will answer for me.

This makes websites and photos much more accessible to me. But it also introduces a level of uncertainty, because these apps are all based on various AI engines and will give somewhat different, and occasionally opposite, responses.

AI is getting better, and for the most part I’ve been able to corroborate the information it provides. And that’s the key—corroborate with trusted sources rather than rely solely on the AI response.

So how do we identify this tiny insect and get it right? Good question. I could send it to the labs at Iowa State for an official identification. Meanwhile, perhaps we’ll just learn about all of these species. They’re probably all here somewhere on Owl Acres.

Photo by Author. Alt Text: A tiny black insect clings to a wall. The camera can’t zoom in close enough to get much detail. We submitted the photo to an AI-powered insect identification app to see what it might tell us. We were not disappointed.